What Is Agentic AI? Explained Without Hype

Agentic AI has become one of those terms that sounds impressive enough to end conversations. People nod, slide it into decks, and move on. The problem is that most of what’s being described isn’t agentic, and most systems being shipped under that label are just old automation patterns wearing a new LLM-shaped hat. After a few years of watching brittle chatbots get rebranded as “autonomous systems,” you start to see the same mistakes repeat. The disappointment that follows isn’t because agentic AI doesn’t work. It’s because we keep explaining it in a way that hides where the real complexity lives.

I’m not interested in adding another definition to the pile. I’m more interested in why so many teams build these systems, feel uneasy about them, and can’t quite articulate what went wrong.

What is agentic AI in simple terms

In simple terms, agentic AI is about responsibility, not intelligence. An agentic system is one you trust to decide what to do next, not just how to respond. That distinction sounds subtle, but it changes everything about how the system is designed, deployed, and monitored.

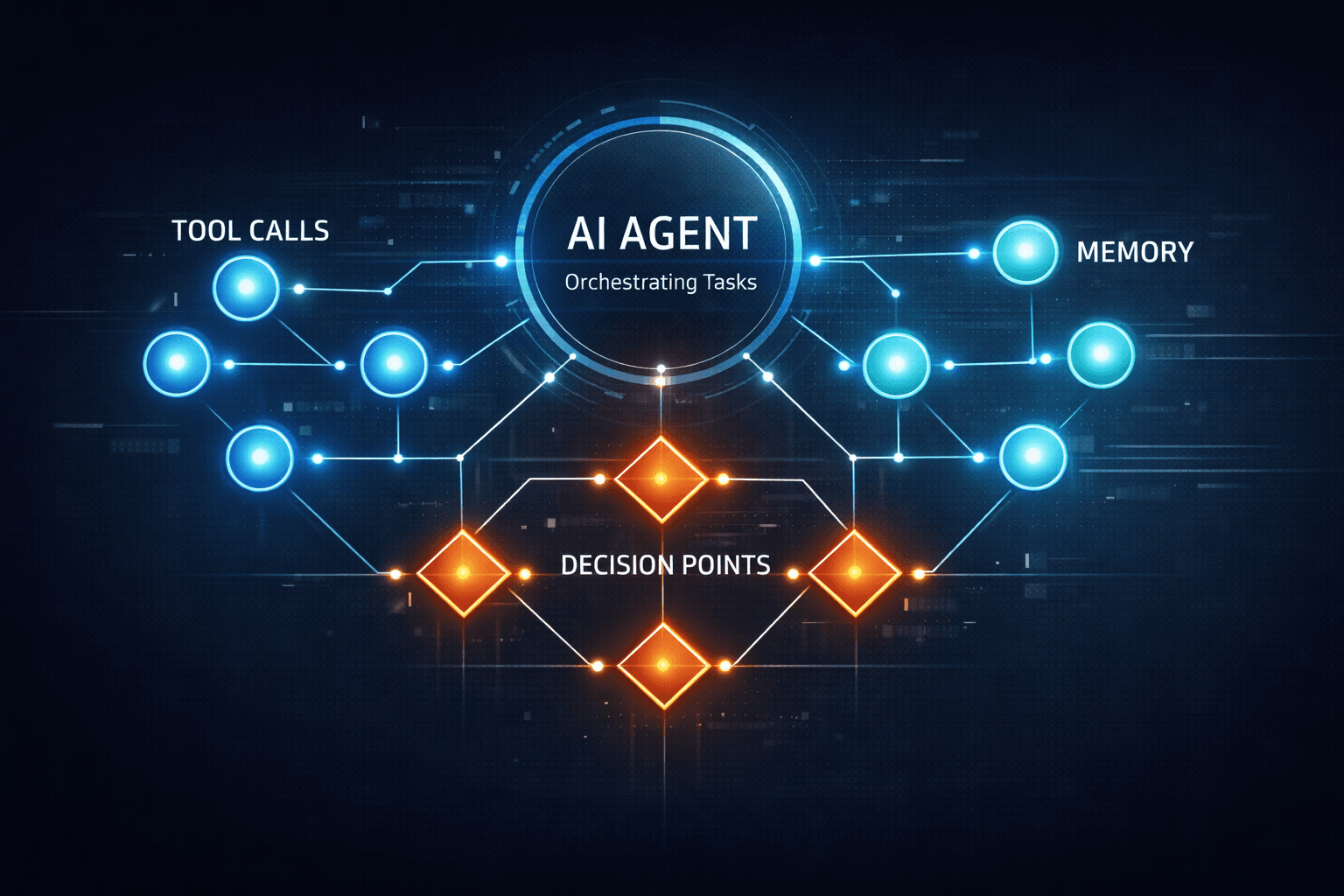

Most AI systems today are reactive. They take an input, transform it, and return an output. Even when an LLM is involved, the interaction is still transactional. You prompt, it responds, the loop ends. Agentic AI breaks that loop open. The system receives a goal, evaluates its current state, decides on an action, observes the result, and then decides again. The intelligence is not just in language generation, but in sequencing, prioritization, and recovery.

This is where people get tripped up. They assume agentic AI means “the model is smarter.” In practice, it usually means the model is average, but the surrounding system is disciplined. Memory exists. State is tracked. Tools are invoked deliberately. Constraints are enforced. Without that scaffolding, autonomy is just a word.

When someone asks for a simple explanation, I usually say this: agentic AI is software that doesn’t wait for you to tell it the next step. It figures that out itself, within boundaries you define. Everything else is implementation detail.

The uncomfortable truth about autonomy

Here’s the part that marketing slides don’t mention. Autonomy is expensive. Not financially at first, but cognitively. The moment you let a system decide its own next action, you inherit all the problems humans deal with: partial information, conflicting objectives, and unintended consequences.

This is why so many “AI agent” demos look magical for five minutes and then quietly disappear. They work perfectly on the happy path and fall apart the moment something unexpected happens: a rate limit, a malformed API response, a user who changes their mind halfway through a task. Real autonomy lives or dies on how those moments are handled.

In production systems we’ve built at Agents Arcade, the most time-consuming part has never been the prompt. It’s the edge cases. The retries. The decision to stop. Knowing when to ask a human for help instead of confidently marching toward the wrong outcome. These are not LLM problems. They’re systems problems.
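To make "the retries" and "the decision to stop" concrete, here is a minimal sketch of a bounded retry wrapper that hands off to a human once it runs out of attempts. It is an illustration, not our production code: `call_with_retries`, `NeedsHuman`, and the default attempt count and delays are all hypothetical names and values chosen for the example.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class NeedsHuman(Exception):
    """Hypothetical signal: retries are exhausted, a person should take over."""


def call_with_retries(
    action: Callable[[], T],
    *,
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `action`, retrying with exponential backoff, then escalate."""
    last_error: Optional[Exception] = None
    for attempt in range(attempts):
        try:
            return action()
        except Exception as exc:  # a real system would catch narrower errors
            last_error = exc
            if attempt < attempts - 1:
                # Back off: base_delay, then 2x, then 4x, ...
                sleep(base_delay * 2 ** attempt)
    # The decision to stop is explicit, not an accident of the loop.
    raise NeedsHuman(f"gave up after {attempts} attempts") from last_error
```

The `sleep` parameter is injectable so the backoff can be tested without waiting. The important design choice is the last line: running out of retries produces a distinct, catchable outcome instead of a silent failure.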

How agentic AI systems work in practice

In practice, agentic AI systems are orchestration engines with a language model at the center, not the top. The LLM is responsible for reasoning and interpretation, but it is never alone. It operates inside a loop that enforces structure.

The loop usually starts with a goal. That goal might be explicit, like “resolve this customer issue,” or implicit, like “keep this workflow moving.” The system evaluates its current state, including memory from previous steps. It then plans an action. That action is almost always a tool call, not a text response. A database query, an API request, a search operation, or even a call to another agent.

Once the action is executed, the system observes the result and updates its state. This is where most naive implementations fail. They treat tool output as text to be summarized instead of signal to be reasoned over. In robust systems, tool results influence future decisions directly. If the data is incomplete, the agent adjusts its plan. If an action fails, it retries or escalates.
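The loop described above fits in a few dozen lines. What follows is a sketch under stated assumptions, not a reference implementation: `choose_action` is a hard-coded stand-in for the LLM call, and `lookup_order`, `AgentState`, and the order data are hypothetical. The point is that tool results land in state as structured data and drive the next decision.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class AgentState:
    goal: str
    history: list[dict[str, Any]] = field(default_factory=list)  # observations so far
    done: bool = False
    escalated: bool = False


def lookup_order(order_id: str) -> dict[str, Any]:
    """Hypothetical tool: returns structured data, not prose."""
    orders = {"A1": {"status": "shipped"}}
    if order_id not in orders:
        raise KeyError(f"unknown order {order_id}")
    return orders[order_id]


TOOLS: dict[str, Callable[..., dict[str, Any]]] = {"lookup_order": lookup_order}


def choose_action(state: AgentState) -> dict[str, Any]:
    """Stand-in for the model's decision; a real system would call an LLM here."""
    if not state.history:
        return {"tool": "lookup_order", "args": {"order_id": "A1"}}
    if state.history[-1]["ok"]:
        return {"tool": None}  # the last observation satisfies the goal
    return {"tool": "escalate"}  # the last action failed, hand off


def run(state: AgentState, max_steps: int = 5) -> AgentState:
    for _ in range(max_steps):
        action = choose_action(state)  # decide
        if action["tool"] is None:
            state.done = True
            break
        if action["tool"] == "escalate":
            state.escalated = True
            break
        try:
            result = TOOLS[action["tool"]](**action["args"])  # act
            state.history.append({"ok": True, "result": result})  # observe
        except Exception as exc:
            # Failures are recorded as signal for the next decision.
            state.history.append({"ok": False, "error": str(exc)})
    return state
```

Calling `run(AgentState(goal="resolve order question"))` performs one tool call, sees a successful result, and stops. Note that the loop, the step budget, and the failure handling all live outside the model.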

Frameworks like LangGraph exist because this loop is hard to manage once things get non-linear. Branching paths, conditional execution, and parallel steps quickly turn into a mess if you rely on ad-hoc glue code. Similarly, the OpenAI Agents SDK formalizes patterns that many teams were already inventing badly: structured tool calling, memory scopes, and explicit agent boundaries.

The key takeaway is this: agentic AI is less about clever prompts and more about explicit control flow. If you can’t draw your system as a state machine, you probably don’t understand it well enough to trust it.
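One way to hold yourself to that standard is to write the state machine down as data. The sketch below assumes a hypothetical set of phases (`PLAN`, `ACT`, `OBSERVE`, `ESCALATE`, `DONE`); your system will have its own, but the idea is the same: every legal move is listed, and anything else is rejected.

```python
from enum import Enum


class Phase(Enum):
    PLAN = "plan"
    ACT = "act"
    OBSERVE = "observe"
    ESCALATE = "escalate"
    DONE = "done"


# Every legal transition is written down. ESCALATE and DONE are terminal.
TRANSITIONS: dict[Phase, set[Phase]] = {
    Phase.PLAN: {Phase.ACT, Phase.ESCALATE, Phase.DONE},
    Phase.ACT: {Phase.OBSERVE},
    Phase.OBSERVE: {Phase.PLAN, Phase.ESCALATE, Phase.DONE},
    Phase.ESCALATE: set(),
    Phase.DONE: set(),
}


def advance(current: Phase, proposed: Phase) -> Phase:
    """Accept the proposed next phase only if the table allows it."""
    if proposed not in TRANSITIONS[current]:
        raise ValueError(f"illegal transition {current.value} -> {proposed.value}")
    return proposed
```

If the model proposes jumping from `ACT` straight to `DONE` without observing the result, `advance` raises instead of letting it through. The table doubles as the diagram you can show a reviewer.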

At this point, it’s worth pausing and acknowledging a realization many teams arrive at late: building agents is closer to building distributed systems than building chatbots. That insight is explored in much more depth in AI Agents: A Practical Guide for Building, Deploying, and Scaling Agentic Systems, and it’s usually the moment when architects stop underestimating the work involved.

Agentic AI vs. traditional automation

Traditional automation is deterministic. You define a sequence, add conditions, and the system follows them blindly. When something changes, you update the rules. This works well for stable domains and predictable inputs. It collapses when the world becomes fuzzy.

Agentic AI thrives in that fuzziness, but at a cost. Instead of rules, you define goals and constraints. Instead of branches, you allow reasoning. Instead of exhaustive coverage, you accept probabilistic behavior. That tradeoff is not always worth it.

I’ve seen teams replace perfectly good automation with agents because it felt modern, only to reintroduce rigid checks after a few bad incidents. The smarter move is often hybrid. Let traditional automation handle the boring, high-confidence paths. Insert agentic components where judgment is actually required.
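A hybrid setup can be as simple as trying deterministic rules first and falling through to the agent only when none of them match. This is a sketch with made-up rules: the ticket fields, the refund limit of 20, and `agent_fallback` are assumptions for illustration, and the fallback here just returns a label where a real one would run a plan/act loop.

```python
from typing import Callable, Optional

Rule = Callable[[dict], Optional[str]]


def small_refund(ticket: dict) -> Optional[str]:
    """Deterministic rule: refunds at or under a fixed limit are auto-approved."""
    if ticket.get("type") == "refund" and "amount" in ticket and ticket["amount"] <= 20:
        return "auto_refund"
    return None


def password_reset(ticket: dict) -> Optional[str]:
    """Deterministic rule: password resets always get the same scripted flow."""
    if ticket.get("type") == "password_reset":
        return "send_reset_link"
    return None


RULES: list[Rule] = [small_refund, password_reset]


def agent_fallback(ticket: dict) -> str:
    """Placeholder for the agentic path, where judgment is actually required."""
    return "agent_review"


def route(ticket: dict) -> str:
    # Boring, high-confidence paths first.
    for rule in RULES:
        outcome = rule(ticket)
        if outcome is not None:
            return outcome
    # Nothing matched: this is the fuzzy case the agent exists for.
    return agent_fallback(ticket)
```

A ticket like `{"type": "refund", "amount": 10}` never touches the agent, while a free-form complaint does. The rules stay cheap to audit, and the agent's blast radius stays small.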

This is where comparisons with chatbots become misleading. A chatbot can feel conversational and still be fundamentally non-agentic. It answers questions. It doesn’t pursue outcomes. The difference is explored well in AI Agents vs Chatbots: What’s the Real Difference?, but the short version is this: if your system never acts without being asked, it’s not an agent.

A brief digression on “thinking” models

There’s a temptation right now to pin everything on model capability. Better reasoning models, longer context windows, chain-of-thought exposure. These things matter, but they don’t fix bad architecture.

We once swapped a mid-tier model for a much stronger one in an existing agent workflow, expecting dramatic improvements. What we got instead was the same failures, just phrased more eloquently. The agent still made the wrong decision because the state it was reasoning over was incomplete. Garbage in, articulate garbage out.

The industry obsession with “thinking harder” misses a more boring truth: agents fail because they don’t know enough about their own situation. Memory and state management matter more than eloquence. In our experience, an explicit representation of state that you can inspect beats reasoning hidden inside the model.

This digression matters because it brings us back to reality. Agentic AI is not magic. It’s software. And software behaves according to what you give it, not what you hope it understands.

Memory, state, and the illusion of continuity

One of the most misunderstood aspects of agentic AI is memory. People talk about it as if it’s a personality trait. In reality, it’s an engineering decision.

Short-term memory is about context. What just happened? What tools were called? What assumptions were made? Long-term memory is about accumulation. What patterns have we seen? What preferences exist? What constraints have proven reliable?

If you mix these carelessly, you get agents that hallucinate consistency. They believe things about users or systems that are no longer true. If you separate them thoughtfully, you get systems that feel coherent without being rigid.

State management is equally critical. An agent that doesn’t know whether it’s halfway through a task or just starting will behave erratically. This is why stateless serverless deployments often struggle with complex agents. Persistence is not optional once autonomy enters the picture.
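Here is one way to keep the two kinds of memory apart and make task state survive a restart. It is a sketch, and every name in it is hypothetical: `TaskState` is short-term and serializes to JSON so it can be checkpointed anywhere, while `LongTermMemory` timestamps each fact so stale beliefs expire instead of lingering.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class TaskState:
    """Short-term: scoped to one task and discarded when the task ends."""
    task_id: str
    step: int = 0
    tool_calls: list[str] = field(default_factory=list)

    def checkpoint(self) -> str:
        # Serialize so a stateless worker can pick the task up later.
        return json.dumps(asdict(self))

    @classmethod
    def restore(cls, blob: str) -> "TaskState":
        return cls(**json.loads(blob))


class LongTermMemory:
    """Long-term: facts accumulate across tasks, but each one can go stale."""

    def __init__(self, max_age_seconds: float) -> None:
        self.max_age = max_age_seconds
        self._facts: dict[str, tuple[str, float]] = {}

    def remember(self, key: str, value: str, now: Optional[float] = None) -> None:
        self._facts[key] = (value, time.time() if now is None else now)

    def recall(self, key: str, now: Optional[float] = None) -> Optional[str]:
        if key not in self._facts:
            return None
        value, stored_at = self._facts[key]
        current = time.time() if now is None else now
        if current - stored_at > self.max_age:
            del self._facts[key]  # too old to trust, so forget it
            return None
        return value
```

The `now` parameter exists only so expiry can be tested deterministically. In practice the checkpoint string would go to a database or key-value store, which is exactly the persistence that a purely stateless deployment lacks.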

These concerns are rarely mentioned in high-level explanations, but they’re where most production incidents originate. Memory leaks aren’t just for low-level languages anymore. In an agent, stale or misfiled memory shows up as bad decisions weeks later.

Decision-making systems, not text generators

The biggest mental shift required to work with agentic AI is to stop thinking of LLMs as text generators. In agentic systems, language is an interface, not the product.

The model interprets goals, evaluates options, and selects actions. The output you care about is not the prose, but the decision. Was the right tool chosen? Was the escalation appropriate? Was the task terminated at the correct point?

This is why evaluation is so difficult. You can’t just score responses for relevance or tone. You have to judge behavior over time. Did the system converge on a solution? Did it respect constraints? Did it fail safely?
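Those three questions can be asked of a recorded run rather than a single response. The sketch below assumes a deliberately simple trace format: each `Step` records which tool was called and whether it succeeded, and a run is expected to end with a hypothetical `finish` or `escalate` step. Real traces carry far more detail, but the shape of the check is the same.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    tool: str
    ok: bool


def evaluate(
    trajectory: list[Step],
    allowed_tools: set[str],
    max_steps: int,
) -> dict[str, bool]:
    """Judge a whole run, not a single response."""
    final = trajectory[-1] if trajectory else None
    return {
        # Did the run end by finishing successfully?
        "converged": final is not None and final.tool == "finish" and final.ok,
        # Did it stay inside the tool allowlist and the step budget?
        "respected_constraints": (
            all(step.tool in allowed_tools for step in trajectory)
            and len(trajectory) <= max_steps
        ),
        # Did it end deliberately (finish or escalate) rather than mid-action?
        "failed_safely": final is not None and final.tool in {"finish", "escalate"},
    }
```

A run that escalates after a failed tool call scores `converged: False` but `failed_safely: True`, which is often the outcome you want. Scoring only the final text would miss that distinction entirely.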

If you’re coming from traditional ML metrics, this can feel uncomfortable. If you’re coming from DevOps, it should feel familiar. You’re observing a system under load and looking for stability.

Failure modes in AI systems are design failures

Every agentic system will fail. The question is how, and whether you notice in time.

Common failure modes include infinite loops, overconfidence, silent degradation, and misplaced autonomy. None of these are fixed by better prompts. They’re mitigated by guardrails, timeouts, and human-in-the-loop checkpoints.

Human-in-the-loop is not an admission of weakness. It’s a recognition that some decisions carry too much risk to automate fully. The best systems I’ve seen treat humans as tools of last resort, invoked deliberately when uncertainty crosses a threshold.
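The guardrails above can be expressed as checks that wrap the agent rather than instructions inside its prompt. This sketch makes a big assumption: that each decision arrives as an `(action, confidence)` pair, where the confidence score comes from somewhere you trust (a calibrated classifier, a verifier, a heuristic). The function name, thresholds, and messages are all hypothetical.

```python
import time
from typing import Callable, Iterable


def guarded_run(
    decisions: Iterable[tuple[str, float]],
    *,
    max_steps: int = 10,
    deadline_seconds: float = 30.0,
    min_confidence: float = 0.6,
    clock: Callable[[], float] = time.monotonic,
) -> str:
    """Consume (action, confidence) pairs and stop on the first tripped guard."""
    started = clock()
    seen: list[str] = []
    for step, (action, confidence) in enumerate(decisions):
        if step >= max_steps:
            return "stopped: step budget exhausted"  # infinite-loop guard
        if clock() - started > deadline_seconds:
            return "stopped: deadline exceeded"  # timeout guard
        if confidence < min_confidence:
            # Uncertainty crossed the threshold: invoke the human deliberately.
            return f"escalated: low confidence on {action}"
        if seen[-2:] == [action, action]:
            return f"stopped: repeating {action}"  # third identical action in a row
        if action == "finish":
            return "done"
        seen.append(action)
    return "stopped: agent produced no further decisions"
```

Every exit path returns a distinct reason, so silent degradation becomes something you can count and alert on. The thresholds are business decisions as much as technical ones, which is why they are parameters and not constants.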

This is also where business and technical views collide. From a business perspective, autonomy sounds like cost savings. From a technical perspective, it sounds like risk. Reconciling those views requires honesty about what the system can and cannot be trusted to do, a tension explored well in What Is an AI Agent? (Business vs Technical View).

Why most explanations miss the point

Most explanations of agentic AI focus on what it could do, not what it takes to make it reliable. They talk about autonomy as a feature instead of a responsibility. They celebrate demos and ignore maintenance.

The reality is less glamorous. Building agentic AI means accepting operational burden. Logging becomes critical. Observability is mandatory. Rollbacks matter. You don’t just deploy an agent and walk away. You babysit it like any other complex system, except this one can surprise you.
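In practice, "logging becomes critical" mostly means logging decisions, not just errors. A minimal sketch using Python's standard `logging` and `json` modules is below. The logger name and the field names (`run_id`, `step`, `action`, `reason`, `outcome`) are assumptions for the example, so adapt them to whatever your observability stack expects.

```python
import json
import logging

logger = logging.getLogger("agent.decisions")


def log_decision(run_id: str, step: int, action: str, reason: str, outcome: str) -> str:
    """Emit one machine-readable line per decision and return it."""
    record = {
        "run_id": run_id,    # ties every step of one run together
        "step": step,
        "action": action,    # what the agent chose to do
        "reason": reason,    # why, as stated at decision time
        "outcome": outcome,  # what actually happened
    }
    line = json.dumps(record, sort_keys=True)
    logger.info(line)
    return line
```

With one JSON line per decision, reconstructing what an agent did last Tuesday becomes a query instead of an archaeology project. Whether the lines are actually emitted depends on how logging is configured in your application.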

Once you internalize that, the hype fades and something more useful takes its place. Respect. Respect for the problem, for the tools, and for the discipline required to make autonomy boring. Because boring is what you want in production.

If you want a smoother path forward, get support from Agents Arcade today.

Majid Sheikh is the CTO and Agentic AI Developer at Agents Arcade, specializing in agentic AI, RAG, FastAPI, and cloud-native DevOps systems.